With no budget, no team, and no allocated time, I built a behavioral research lab inside a Fortune 500 company — then used it to conduct both industry-grade exploration for CES and a statistically rigorous doctoral experiment. The finding: spatial interaction design measurably shifts motivation quality (p=.03). The method: transferable to any emerging technology.

As a researcher at Harman International — a major Samsung subsidiary and supplier to luxury automotive manufacturers — I was invited into a meeting with a strategic problem: senior leadership needed to envision autonomous vehicle experiences for their annual CES showcase, but the initiative had no budget, no team, and no formal structure.

The initial scope was secondary research on technology trends. But I recognized the limitation — we weren't uncovering anything surprising. What we needed was primary research, but stakeholders couldn't envision how to collect data from sketches still in development.

During a meeting at the company's design headquarters with the VP — a renowned, award-winning designer — I proposed building a low-fidelity prototype to collect user feedback. His response was direct: "If you know what you are doing, go ahead."

My strategic decision: use the same experimental lab to serve both rapid industry insights and rigorous doctoral research — demonstrating that industry and academic research can exist in productive synergy.

The automotive context is the specific scenario, but the capabilities demonstrated are universal: building research infrastructure from zero resources, running experiments that produce statistically defensible evidence, and doing it at speed. These transfer directly to:

Privacy vs. social features in financial apps — the same spatial design question this study answers

How interface design affects motivation for long-term behavior change — the exact dependent variable tested here

Validating experiences for products that don't fully exist yet — provotyping's core purpose

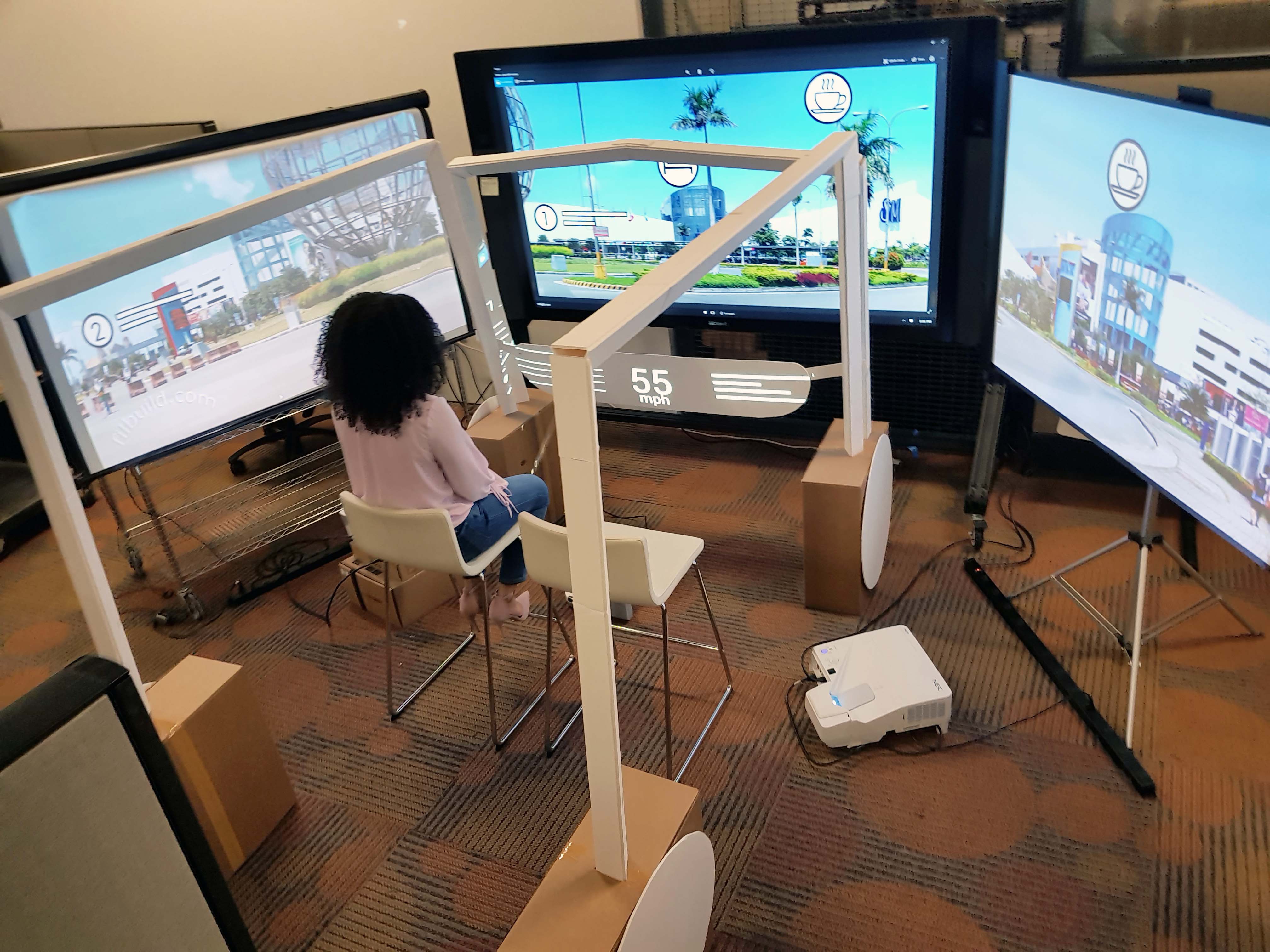

I built an immersive autonomous vehicle simulation using "smoke and mirrors" prototyping — foam board, projectors, screens, speakers, and cameras creating experiences that feel real without building actual technology. Results were ready within two weeks — from initial meeting to lab construction to complete data collection.

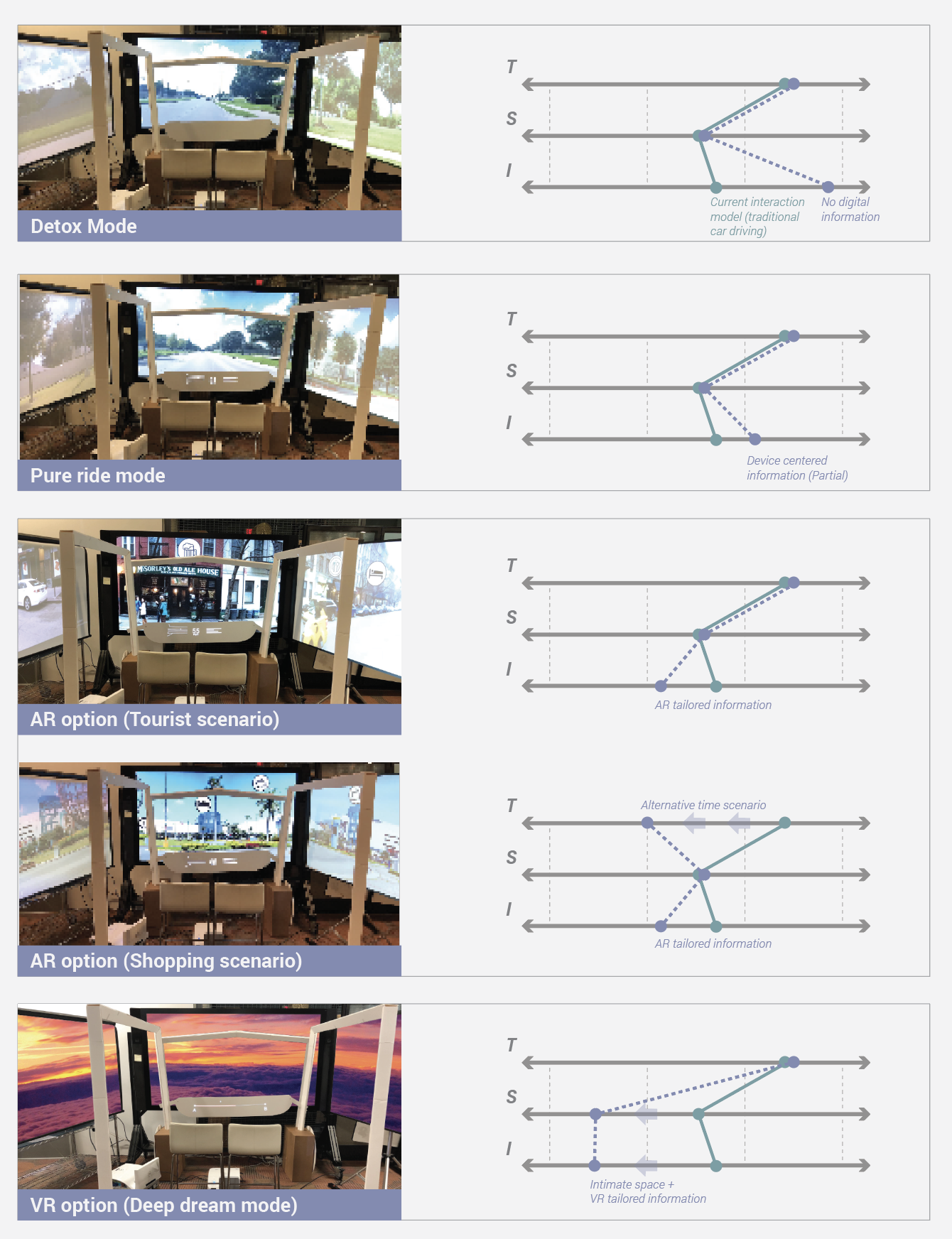

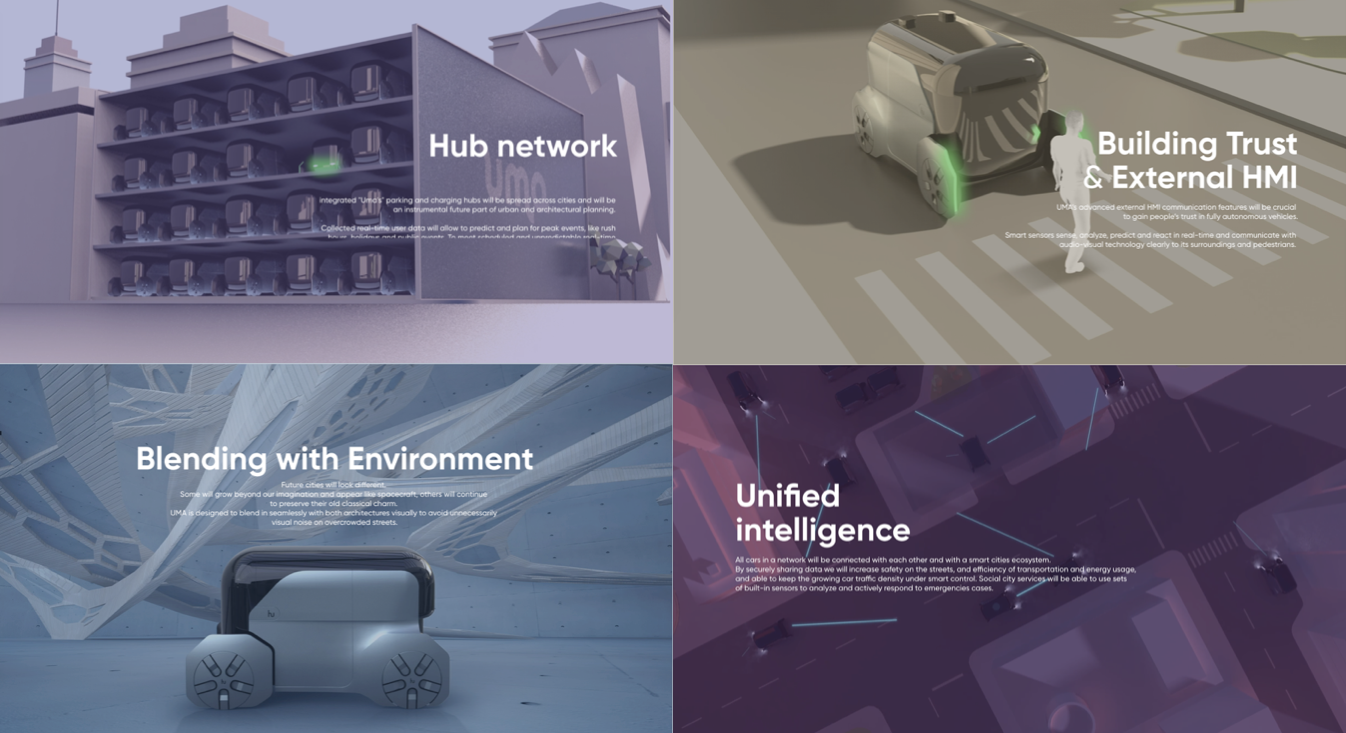

Using my doctoral provotyping methodology, I created five interaction modes exploring the transition from current driving culture to fully autonomous experiences:

No data displayed. Challenged car culture expectations — could users tolerate a blank screen?

Testing the absolute minimum information threshold for user comfort in autonomous transit.

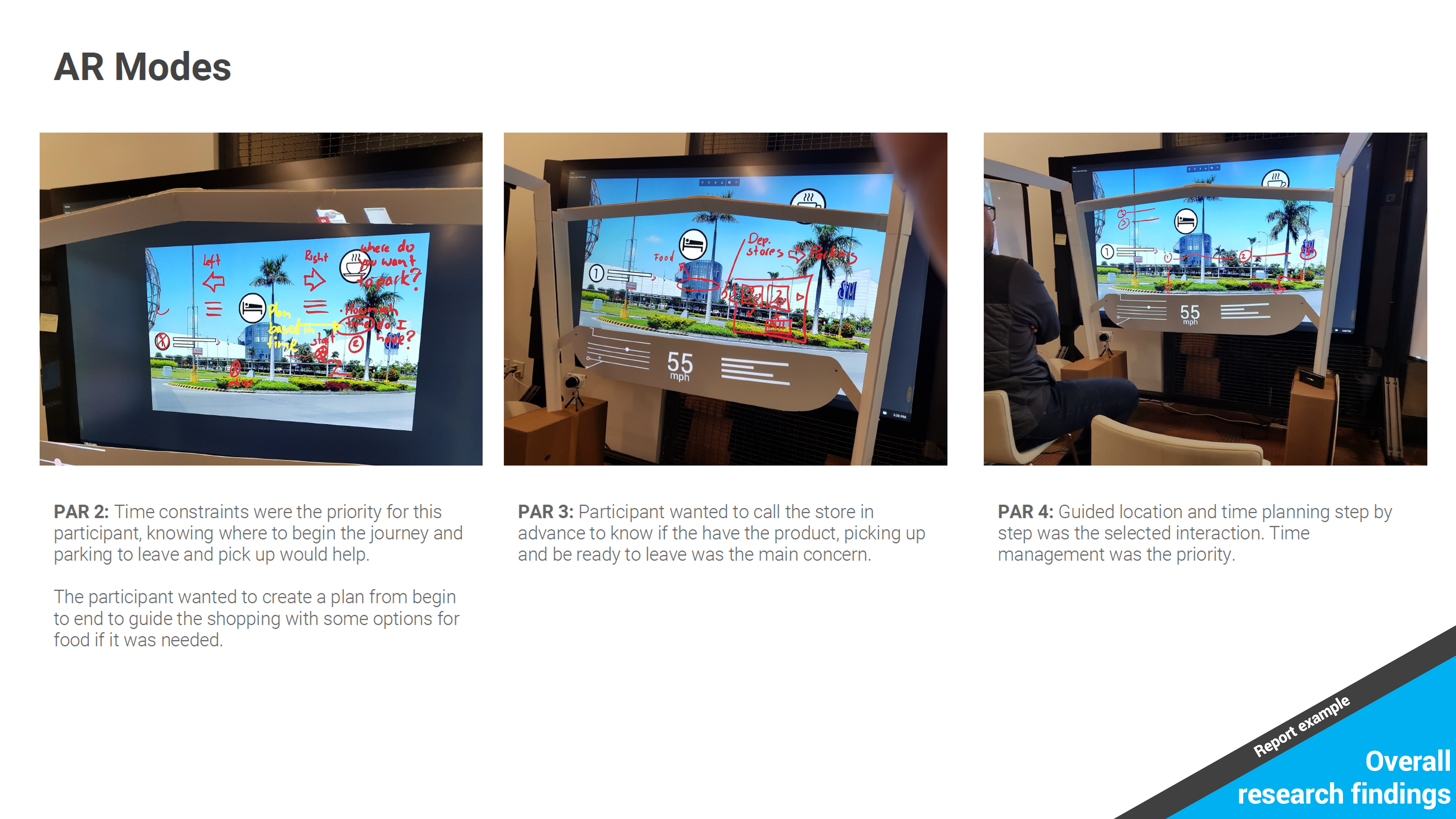

Tourist, Shopping, and Deep Dream modes testing information density from augmented to fully virtual.

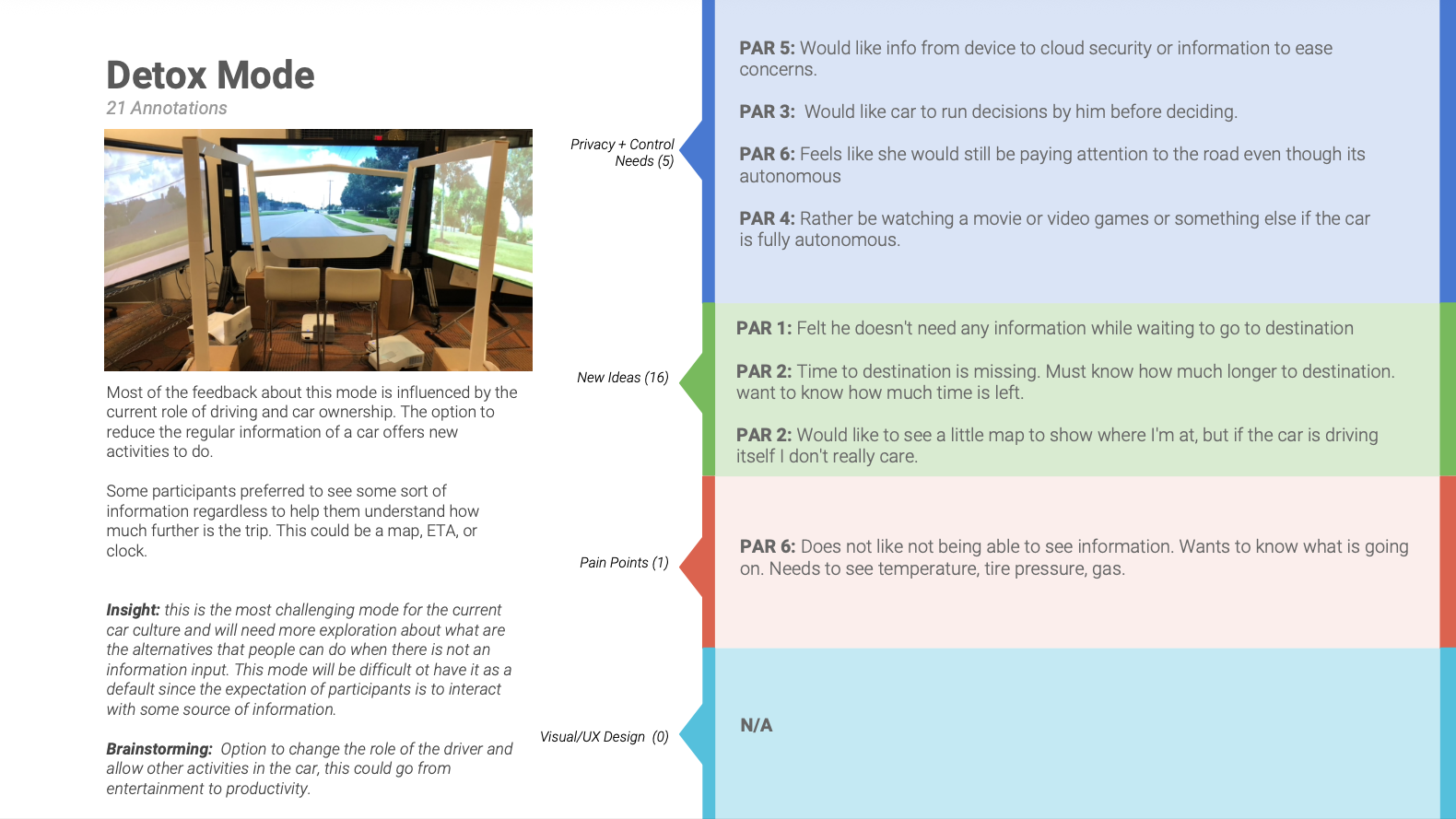

Provotyping reveals what people need to transition from the current paradigm — not what they'll ultimately need. Participants demanded car data (speed, health) despite full autonomy making it irrelevant.

Despite emotional design framing, participants prioritized time management. Even when freed from driving, efficiency remained the dominant value.

In shared vehicles without designated drivers, voice raises control questions — tone becomes a resource for authority, potentially reducing individual motivation.

Participants appreciated personalization but feared residual data in non-owned vehicles. Transparency about data deletion is critical for shared technology acceptance.

Building on the exploratory phase, I designed a controlled mixed methods experiment — the core of my doctoral dissertation — testing whether interaction design attributes can function as controlled independent variables to test behavioral theories.

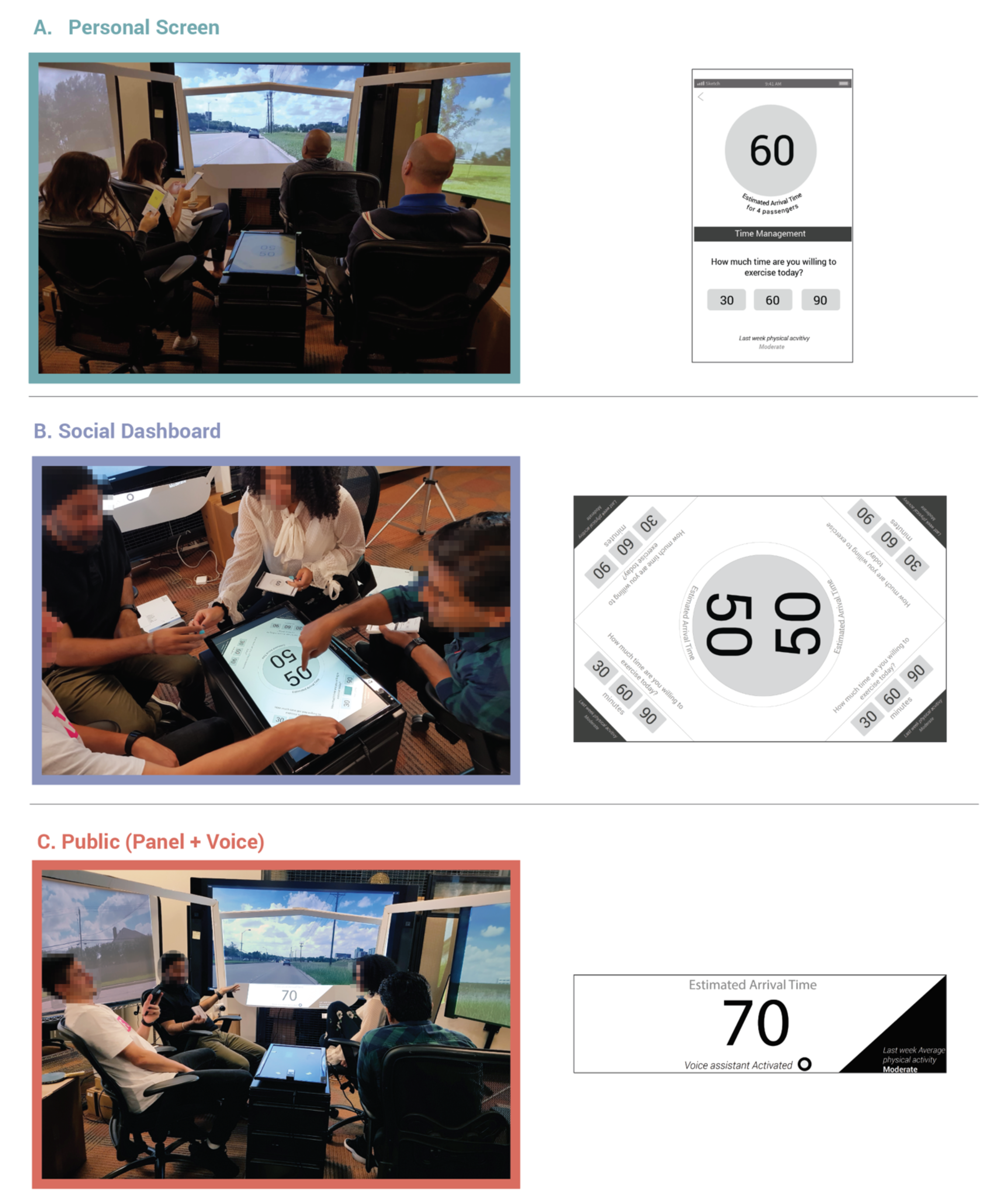

Independent variable: Proxemics (spatial interaction design) manipulated through three prototypes:

Dependent variable: Self-Determination Theory motivation regulations (intrinsic vs. extrinsic)

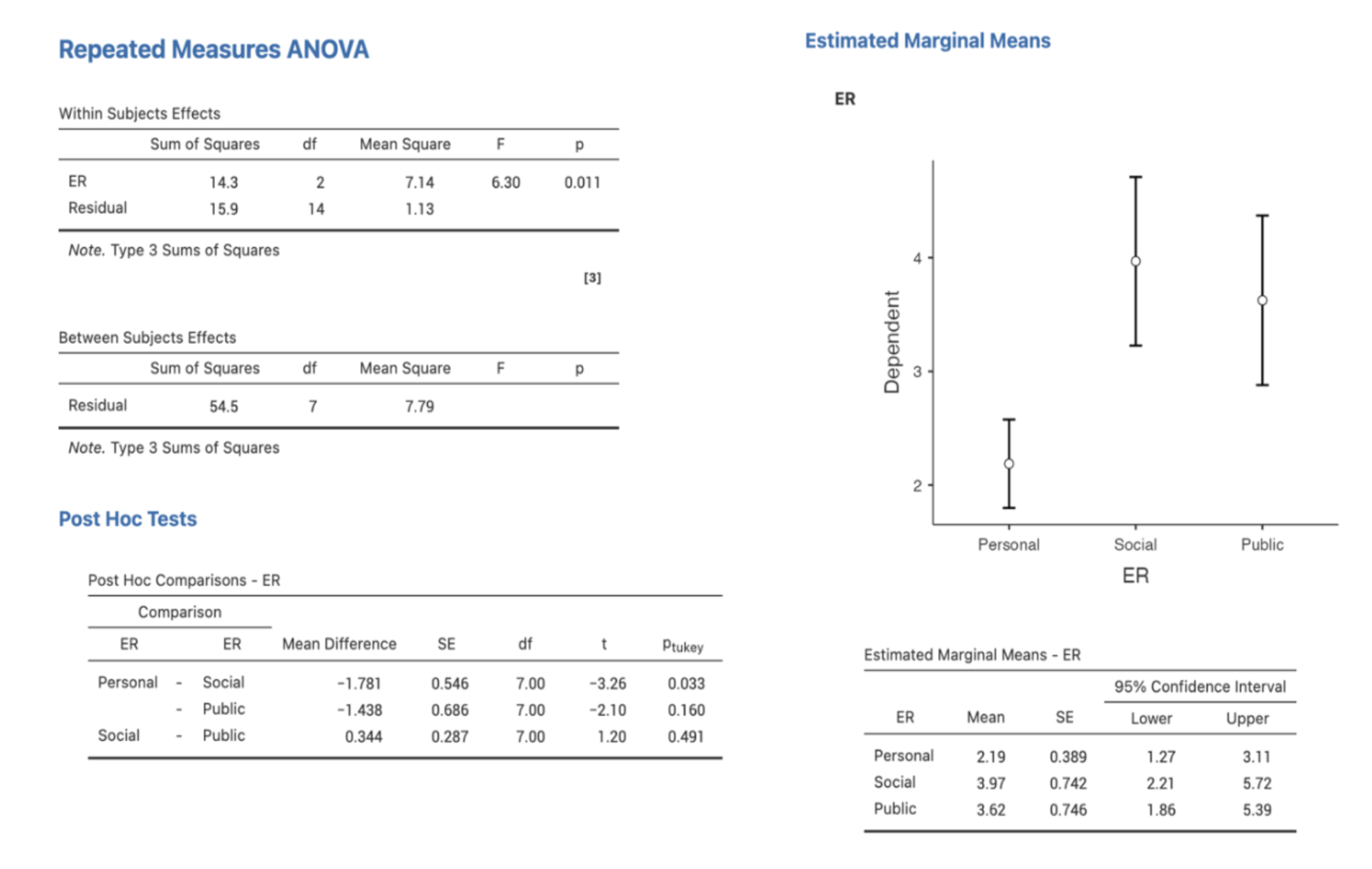

Method: Repeated measures ANOVA + semi-structured interviews (n=8)
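A minimal sketch of the quantitative half of this method, assuming a one-way repeated measures design in which each participant gives one score per prototype condition. The function name, the data layout, and every number in `placeholder_scores` are illustrative assumptions, not the study's analysis code or data.

```python
from statistics import mean


def repeated_measures_anova(scores):
    """One-way repeated measures ANOVA.

    scores: list of rows, one row per participant, one value per condition.
    Returns (F statistic, df for conditions, df for error).
    """
    n = len(scores)       # number of participants
    k = len(scores[0])    # number of conditions
    grand = mean(v for row in scores for v in row)
    cond_means = [mean(row[j] for row in scores) for j in range(k)]
    subj_means = [mean(row) for row in scores]

    # Partition total variability into condition, participant, and error parts.
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_cond - ss_subj

    df_cond = k - 1
    df_error = (k - 1) * (n - 1)
    f_stat = (ss_cond / df_cond) / (ss_error / df_error)
    return f_stat, df_cond, df_error


# Made-up placeholder ratings: 8 participants x 3 prototype conditions.
# These values are NOT the study's data.
placeholder_scores = [
    [2.0, 2.5, 3.5],
    [1.5, 2.0, 3.0],
    [3.0, 3.0, 4.0],
    [2.5, 3.5, 3.0],
    [1.0, 2.0, 2.5],
    [2.0, 2.0, 3.5],
    [3.5, 3.0, 4.5],
    [2.0, 3.0, 3.0],
]

f_stat, df_cond, df_error = repeated_measures_anova(placeholder_scores)
print(f"F({df_cond}, {df_error}) = {f_stat:.2f}")
```

The p-value then comes from the F distribution with these degrees of freedom, for example via `scipy.stats.f.sf(f_stat, df_cond, df_error)` if SciPy is available. Because every participant experiences every condition, between-participant differences are removed from the error term, which is what makes this design workable with a small sample.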

This isn't academic abstraction. The question — "does making an interface more private or more social change how motivated people feel?" — applies to every product with social features, shared experiences, or privacy decisions.

The experimental rigor means the answer isn't "we think so" or "users told us". It is a statistically significant effect (p=.03) from a controlled, repeated measures comparison. That's the difference between a research opinion and evidence a VP can stake a product decision on.

Participants felt significantly less externally regulated (motivated by rewards/punishments) in the personal/intimate condition compared to the social condition. In other words: private interfaces shifted motivation toward more self-determined engagement, which is critical for sustaining long-term behavior change.

What this means practically: When you design an interface to feel more private and personal, users make more self-determined decisions. When you make it social and public, external pressure increases. Neither is inherently better — but designers need to know which they're creating and why.

The key nuance: some individuals benefited from positive peer pressure. This means the design recommendation isn't "always go private" — it's "provide adjustable privacy controls" and understand the psychological tradeoffs of each decision.

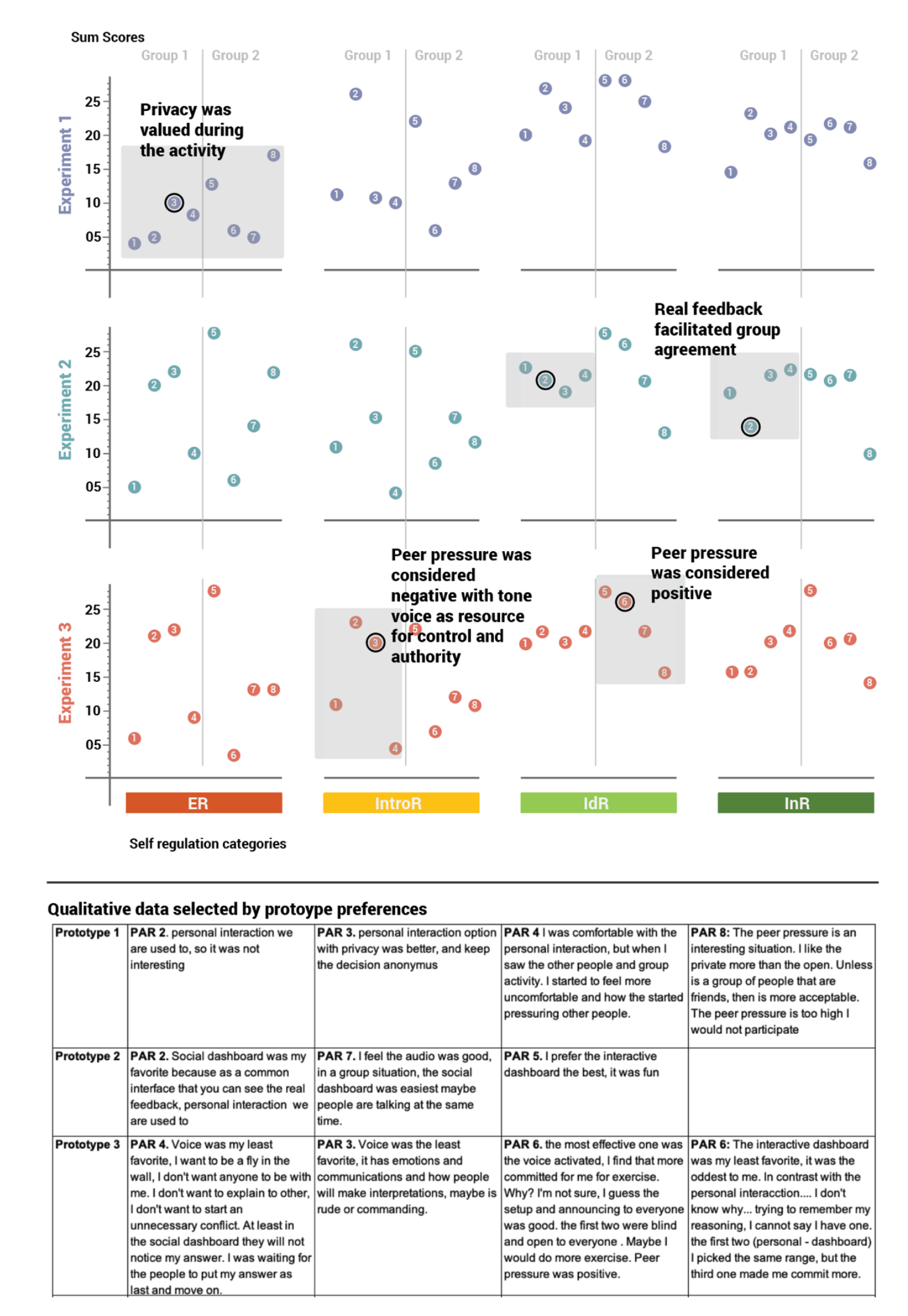

The power of this research lies not in any single method but in the strategic integration of quantitative and qualitative methods. Quantitative data showed which design had statistical impact. Qualitative data explained why and how. I used extreme quantitative scores to select which participant comments to examine, making qualitative analysis efficient and targeted rather than exhaustive.
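The selection step can be sketched as follows, assuming each participant has a single summary score, such as the difference in external regulation between two conditions. The function name, participant IDs, and scores are hypothetical placeholders, not the study's data.

```python
def select_extreme_participants(summary_scores, n_each=2):
    """Pick the participants with the lowest and highest summary scores.

    summary_scores: dict mapping participant ID to one numeric score.
    Returns a dict with the IDs at each extreme, ordered from low to high.
    """
    ranked = sorted(summary_scores, key=summary_scores.get)
    return {"lowest": ranked[:n_each], "highest": ranked[-n_each:]}


# Made-up placeholder difference scores for 8 participants.
placeholder_differences = {
    "P1": 0.5, "P2": -1.0, "P3": 2.0, "P4": 0.0,
    "P5": 1.5, "P6": -0.5, "P7": 0.25, "P8": 1.0,
}

to_review = select_extreme_participants(placeholder_differences)
print(to_review)
```

Interview transcripts for the returned IDs are read first, so the qualitative pass starts with the participants whose scores diverged most in either direction.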

"Smoke and mirrors" prototyping — foam board, projectors, screens — produced insights that challenged the assumptions of an award-winning design team. Methodological clarity matters more than resource availability.

The same lab served both rapid CES insights and a peer-reviewed doctoral experiment. The key was designing research that works at multiple levels of rigor simultaneously — not choosing between speed and depth.

Using quantitative scores to guide qualitative analysis is dramatically more efficient than analyzing all data equally. Statistical patterns point you toward the right participant stories to examine.

This is the core contribution: demonstrating that interaction design attributes can function as controlled variables to test behavioral theories. UX decisions aren't just preferences; they're interventions with measurable outcomes.

The transferable skill is moving fluidly between rapid qualitative insights for product iteration, rigorous quantitative validation when strategic decisions need statistical confidence, and mixed methods integration when neither tradition alone suffices. This combination doesn't require autonomous vehicles. It works with any emerging technology where you need to validate experiences before they fully exist.

