ATLAS had been serving Audi America's dealership network for a decade, but its social features had near-zero engagement, even among the few executives with early access. As the first UX researcher at IXIS Digital, I discovered the problem wasn't missing features. It was the fear of looking incompetent.

ATLAS was a decade-old business intelligence platform designed to serve 400+ Audi America car dealerships — the flagship product of IXIS Digital's enterprise ambitions. The platform had been deployed internally with some Audi executives granted early access, but despite incorporating social features years earlier (chat, insight posting, activity feeds), engagement remained near zero. Almost no one was using it.

Leadership's initial hypothesis was familiar: we need more social media mechanics — notifications, gamification, performance dashboards.

Joining as their first dedicated UX researcher, I spent the first weeks conducting semi-structured interviews with data analysts across the platform. What I found reframed the entire product strategy: professional data sharing operates under fundamentally different psychological dynamics than social platforms.

The pressure to appear smart, the fear of looking confused, the absence of psychological safety — these weren't technology problems. They were human problems masquerading as feature requests.

Through weekly semi-structured interviews with data analysts, a pattern crystallized: people weren't refusing to share because the platform lacked features. They were paralyzed by performance anxiety.

"Sharing 'insights' meant I had to sound brilliant. What if I'm wrong? What if my analysis is obvious? I'd rather say nothing."

— Senior Data Analyst, IXIS Platform User

The platform reinforced a toxic dynamic: only share if you're certain, only speak if you're smart. This is the opposite of how learning organizations function.

I designed three deliberately low-stakes entry points for participation:

"I don't understand this, can anyone help?" — Normalizing not-knowing as a legitimate form of participation.

"Something feels off here, what am I missing?" — Creating space for productive doubt without requiring proof.

"Here's a half-formed idea, what do you think?" — Legitimizing incomplete thinking as contribution.

This wasn't a UI change — it was a psychological safety intervention grounded in behavioral science. Lower the stakes of participation, normalize uncertainty, reward collaborative thinking over individual brilliance.

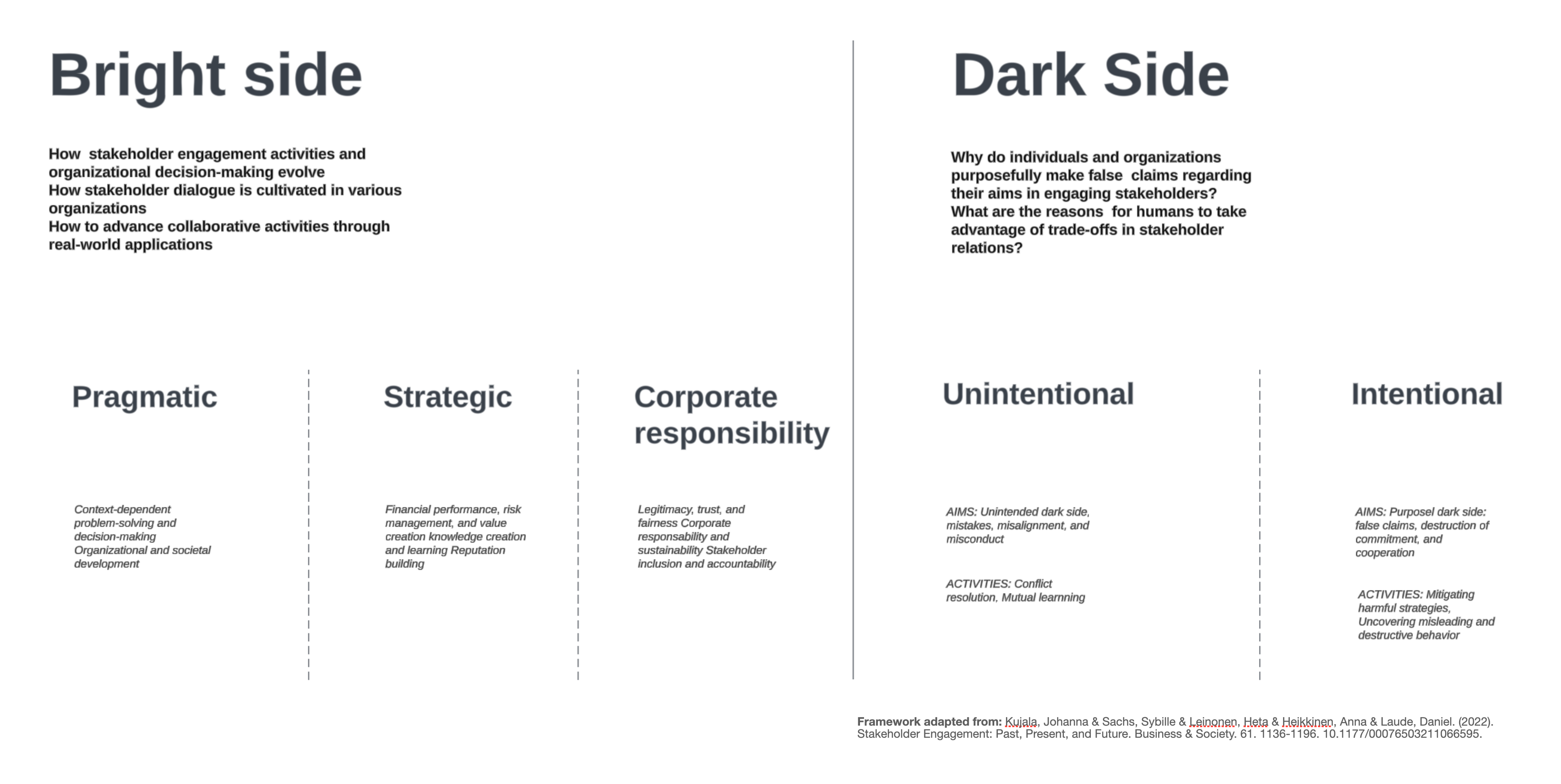

Drawing from stakeholder engagement research — particularly the bright/dark side typology identified in Kujala, Sachs, Leinonen, Heikkinen & Laude's systematic review of engagement literature (Business & Society, 2022) — I developed a conceptual framework showing how engagement activities exist on a spectrum, and why copying social media tactics risked pushing professional collaboration toward destructive patterns.

I used my doctoral methodology, provotyping (provocative prototyping), to surface stakeholder assumptions that would never emerge in traditional requirements gathering. Early, deliberately rough prototypes forced conversations about questions the team hadn't thought to ask.

These provocations revealed valuable insights about the organization: IXIS was navigating a natural tension between innovation speed and methodological rigor. The team brought together diverse backgrounds — from PhD-level design thinking to decade-tenured operational expertise — creating a dynamic where different perspectives on "moving fast" coexisted productively.

My goal was to introduce a research-driven approach that brought complexity to the surface early, when addressing it is most cost-effective. In a startup environment, this meant adapting academic rigor to business velocity.

Every finding required three translations: academic rigor for my research integrity, business impact language for executive buy-in, and implementation clarity for engineering. This constant code-switching became one of the most valuable skills I developed.

To address ATLAS's low internal engagement, I created Research Bytes — a weekly "dosage of research insights and inquiries" distributed through the company intranet and Slack. This wasn't traditional research reporting. It was a strategic communication infrastructure designed to challenge assumptions and start conversations across the entire organization.

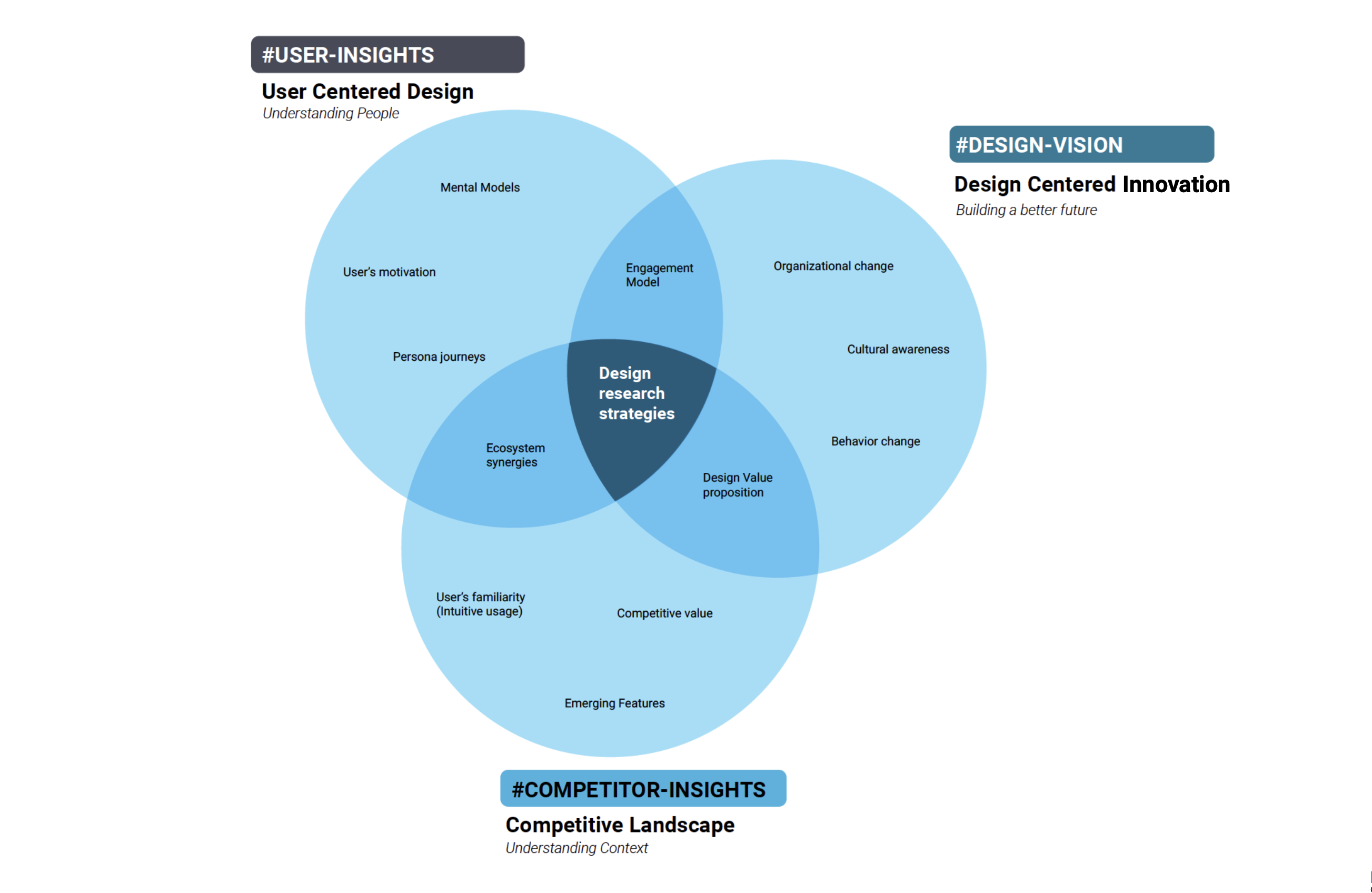

Understanding people: mental models, motivations, persona journeys, engagement patterns

Understanding context: emerging features, competitive value, ecosystem synergies

Building better futures: organizational change, cultural awareness, behavior change

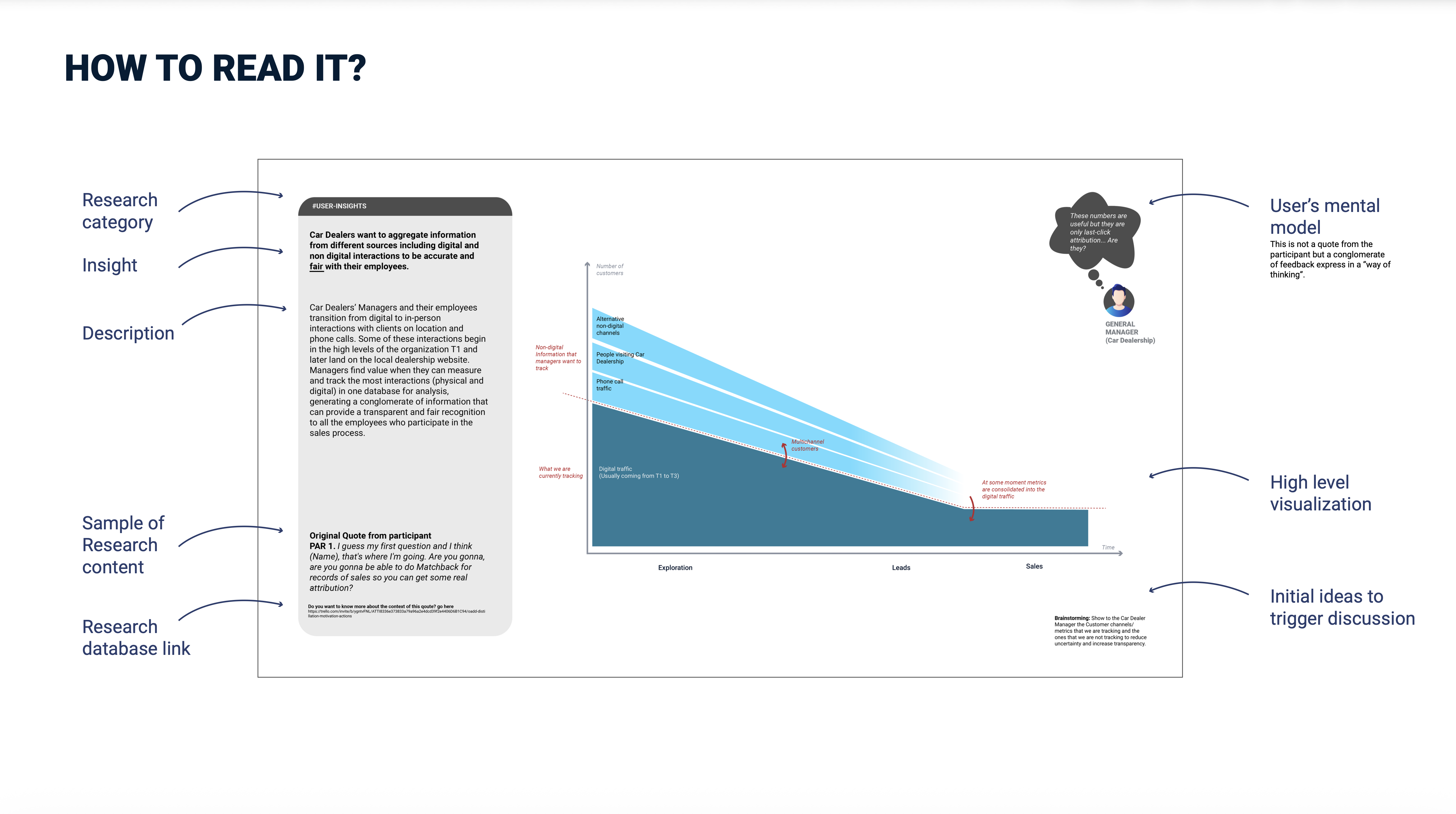

Each Research Byte was designed as a self-contained artifact: research category, key finding written as a user mental model, contextual description, original participant quotes, visualization, discussion triggers, and database links for deep dives. Each one was posted weekly to the ATLAS Newsfeed, shared in Slack (#product_strat, #general), and discussed biweekly in UXR Zoom meetings.

"Sometimes, the best insight is not the one that validates your idea; it is the one that challenges your assumptions and provides an alternative point of view that makes you feel uncomfortable."

— Research Bytes Philosophy

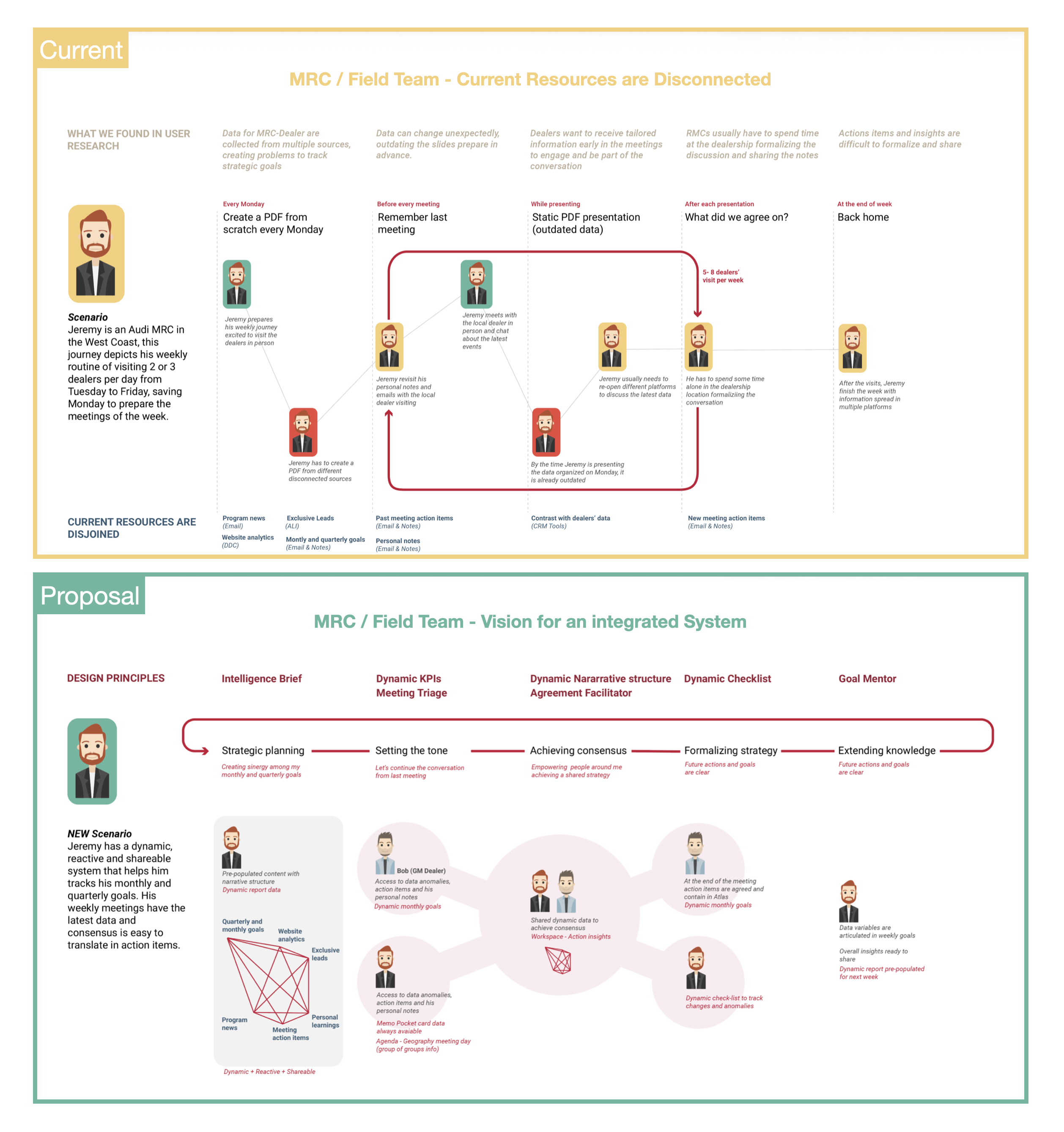

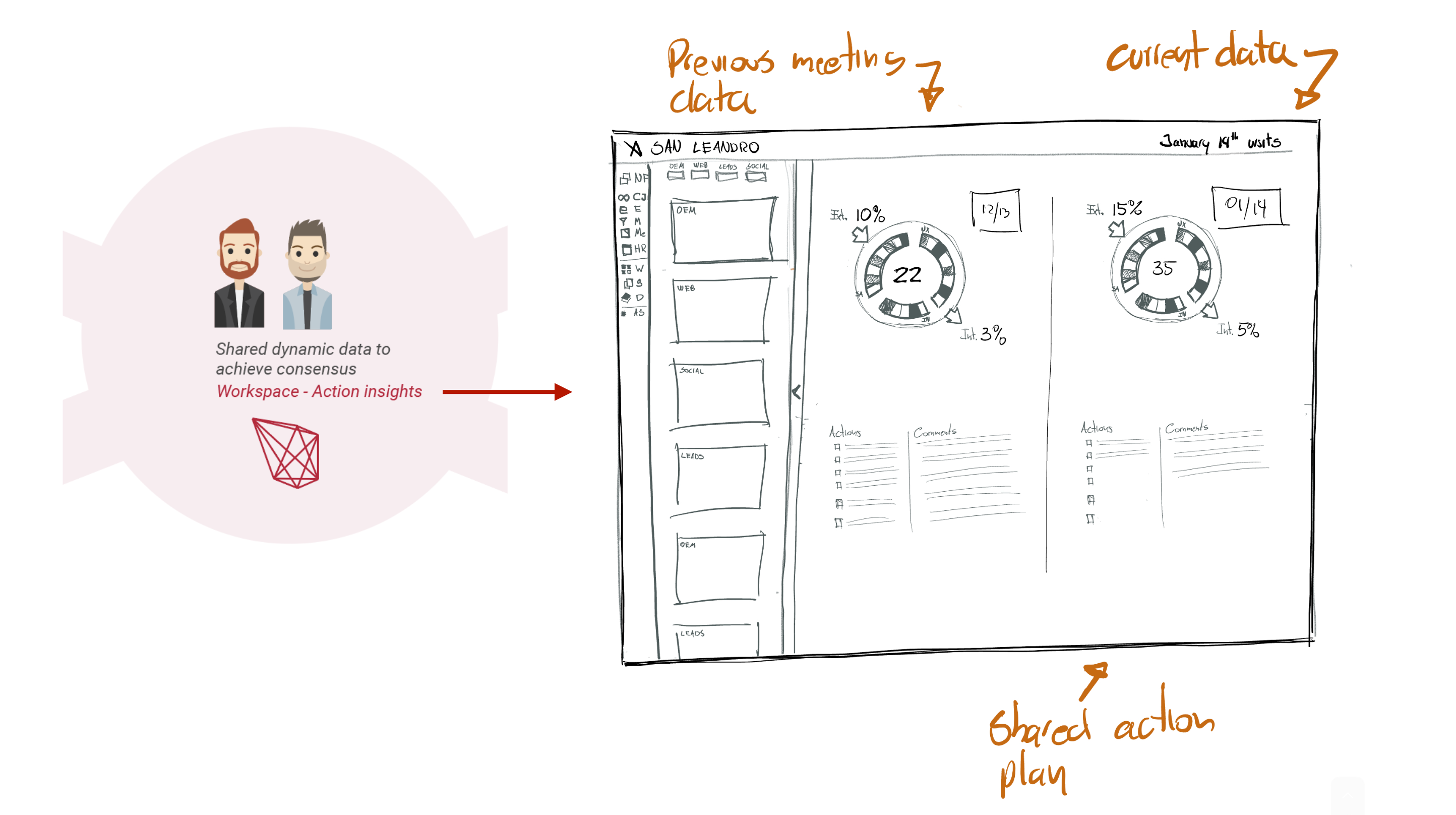

My work extended into designing a comprehensive engagement system — moving from individual features to interconnected infrastructure:

Guided workflows for three organizational processes: Advice Process (seeking input before decisions), Consent Process (ensuring stakeholder alignment), and Consensus Building (facilitating group agreements).

Structured pathways reducing friction in professional knowledge sharing — making the act of contributing feel safe and purposeful rather than risky and performative.

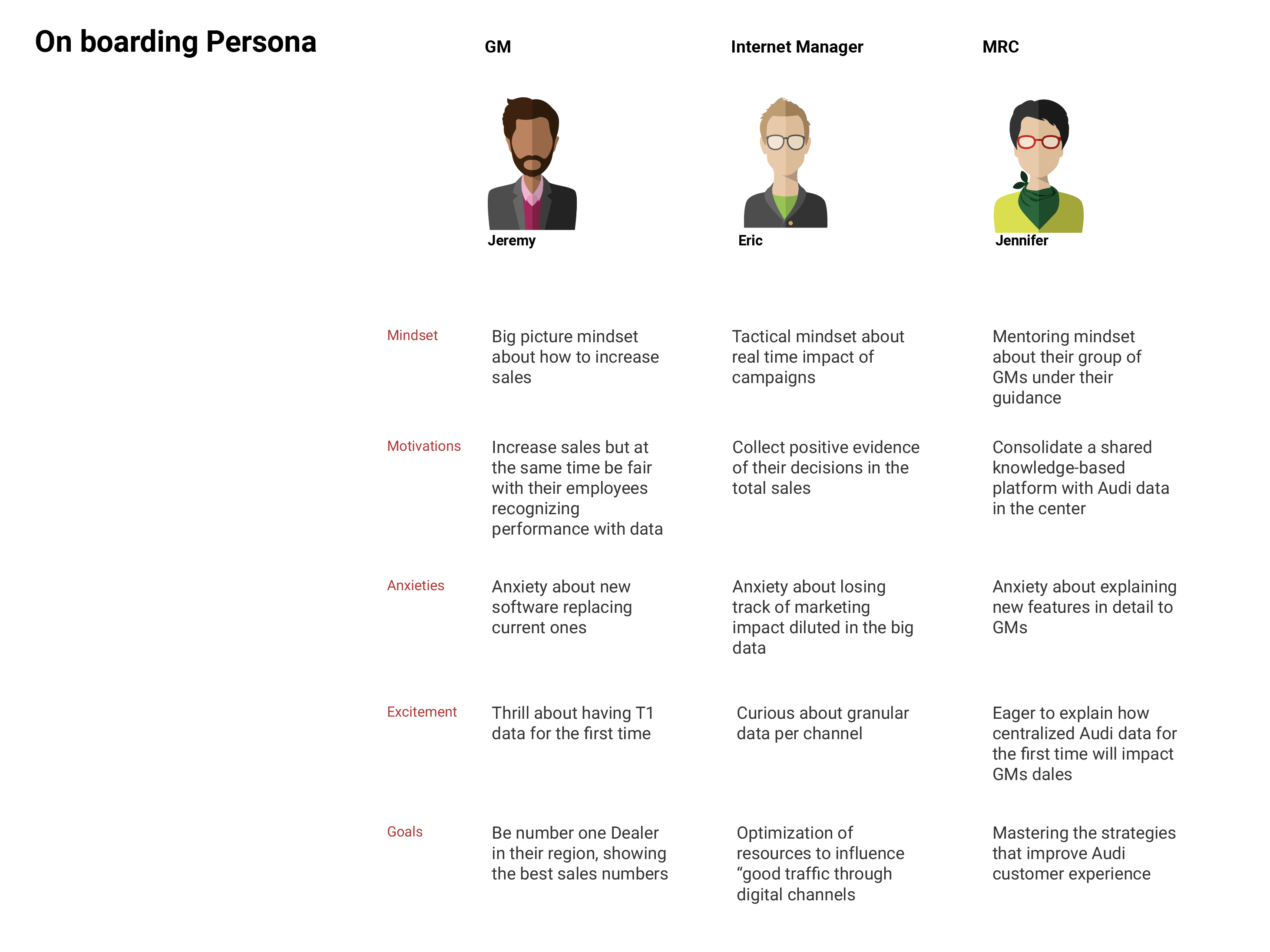

Three primary personas mapped across mindsets, motivations, anxieties, excitement triggers, and goals — not demographic profiles, but psychological landscapes for personalizing the experience.

Working at IXIS as their first dedicated UX researcher gave me unprecedented access to C-level strategic decisions and direct impact on product direction. The company's youth and agility meant I could experiment with research methodologies that would take years to pilot in established companies.

My work visa limited me to 12 months — a constraint that forced ruthless prioritization and shaped everything about how I approached the work.

In 12 months, I had to choose between perfect comprehensive research and rapid iterative insights. I chose velocity, and learned that a well-timed partial insight usually beats a complete insight that arrives too late.

The QCT model, the Bright/Dark Side framework, Research Bytes as a template — these thinking tools don't disappear when the researcher leaves. They become part of how the organization thinks.

Research in a startup differs from research in a larger company. Limited resources mean every research initiative must justify itself immediately. But direct access to strategic decisions compensates with an impact-per-effort ratio larger companies can't match.

Documentation is survival. When you leave, your frameworks must be self-explanatory. This constraint made me build systems — not reports — creating research infrastructure that sustains beyond the researcher.
